As discussed in our overview of Oracle BRM Testing, there are several aspects to testing BRM. In this post, we focus on the front-end aspect of testing. Typically, this is the interface that is used internally by the Business Configuration Center to manage customer accounts, product upgrades, billing information corrections, etc. It is also the interface exposed to external customers, who use the Customer Center to view their accounts, change passwords, and access billing information.

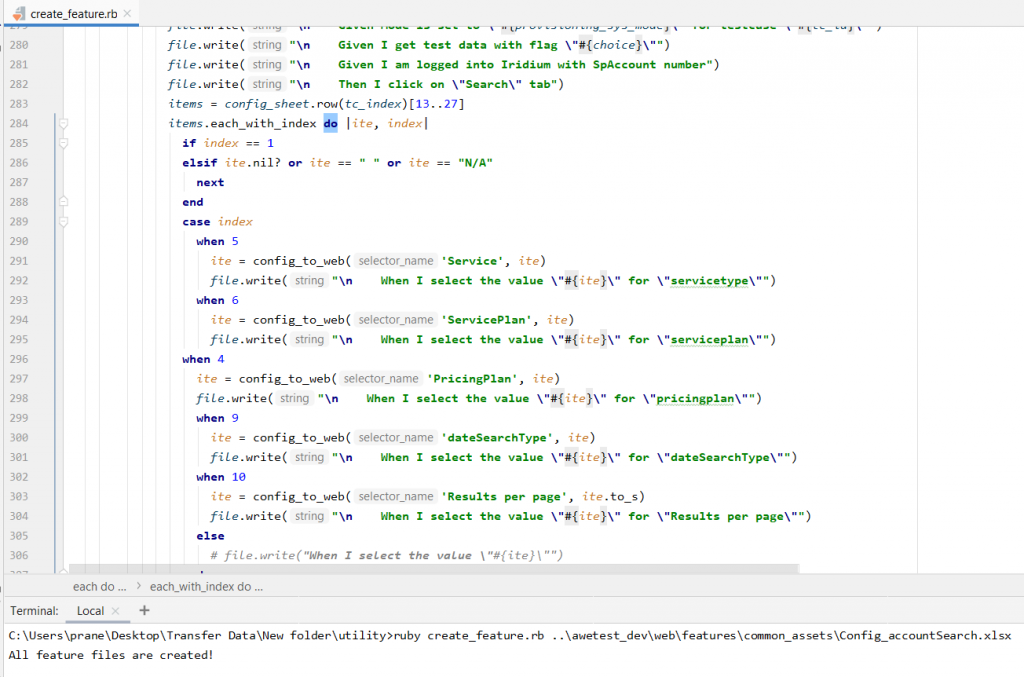

To test the BRM UI, our typical approach has been a hybrid framework (built using Ruby/Selenium/Cucumber) to manage the several thousand scenarios that we’ve automated for our customers. Customers can use BRM’s built-in UI or build their own custom UI; our testing approach is efficient for any UI. The tests are completely data-driven, and we use Microsoft Excel to manage the test data. We have created Ruby utilities that automatically generate Cucumber BDD test scripts from the Excel test data. Below is a sample utility file which creates all the feature files for our respective modules:
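As an illustration of the idea (not the utility used on the project), here is a minimal sketch of generating feature files from test-data rows. It assumes the rows have already been read from the spreadsheet into hashes keyed by column name; the step wording, the `build_feature` and `write_features` helpers, and the sample row are all hypothetical.

```ruby
require 'fileutils'

# Build the text of one Cucumber feature file from a module name and its rows.
# Each row is a hash keyed by spreadsheet column name (assumed layout).
def build_feature(module_name, rows)
  lines = ["Feature: #{module_name}", '']
  rows.each do |row|
    lines << "  Scenario: #{row['Testcase#']} - #{row['Testcase Desc']}"
    # The step wording below is hypothetical; real steps must match the
    # project's own step definitions.
    lines << '    Given I am logged in to the portal'
    lines << "    When I perform \"#{row['UIAction']}\" for the \"#{row['Service']}\" service"
    lines << "    Then the result should be \"#{row['ExpectedResult']}\""
    lines << ''
  end
  lines.join("\n")
end

# Write one .feature file per module into dir and return the paths written.
def write_features(rows_by_module, dir)
  FileUtils.mkdir_p(dir)
  rows_by_module.map do |module_name, rows|
    path = File.join(dir, "#{module_name}.feature")
    File.write(path, build_feature(module_name, rows))
    path
  end
end

if __FILE__ == $PROGRAM_NAME
  sample = [
    { 'Testcase#' => 'TC001', 'Testcase Desc' => 'Create a Telephone account',
      'UIAction' => 'creating account', 'Service' => 'Telephone',
      'ExpectedResult' => 'Pass' }
  ]
  puts build_feature('ServiceType1_Regression', sample)
end
```

Keeping the text generation (`build_feature`) separate from the file writing makes the generator easy to check without touching the disk.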

Writing ~7,500 tests manually would take about 650 hours (roughly five minutes per test), whereas our Ruby utilities generate them automatically in a few minutes. Building such a framework typically takes around 40 hours. Below is a screenshot showing how the test data is managed:

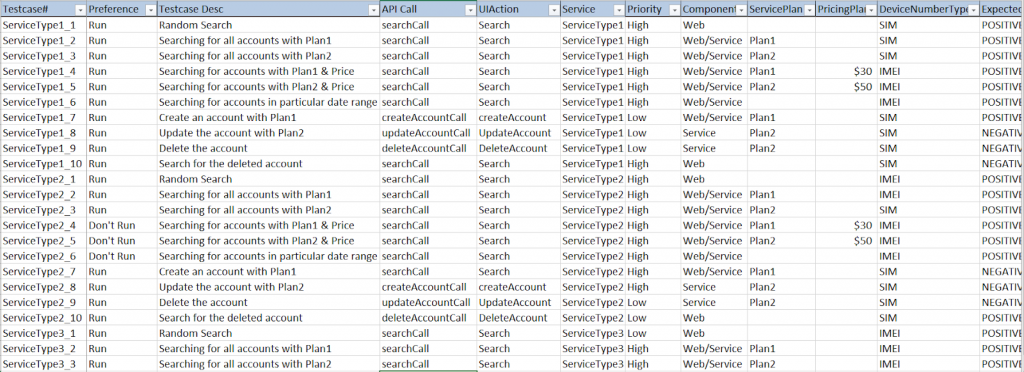

All the scenarios that we tested through the UI were also tested through web services. Both the browser and the service tests can be managed in a single Excel sheet, along with the expected and actual results (for both positive and negative tests). Some of the columns we leveraged in our testing are:

| Column Name | Description |
| --- | --- |
| Testcase# | Testcase ID or Scenario ID |
| Preference | Run or Don’t Run a test case |
| Testcase Desc | A brief description of what the test does |
| API Call | Service call for that scenario |
| UIAction | Action to be done on Web browser, i.e., searching/creating account/updating account/deleting account |
| Service | Name of the Service Type – Telephone/Internet |
| Priority | Priority of the test |
| Component | If it is run on Web/Service/Both |
| ServicePlan | Combination of services (Telephone/Internet) |
| PricingPlan | Price for each plan |
| DeviceNumberType | Searching using SIM/IMEI numbers associated with the device |
| ExpectedResult | If it is a positive test or negative test |
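To show how the Preference and Component columns could drive test selection, here is a minimal sketch. It assumes each row is a hash keyed by column name and that the cells hold values such as `Run` / `Don't Run` and `Web` / `Service` / `Both`; the exact cell values and the `runnable_rows` helper are assumptions for illustration.

```ruby
# Component values (lowercased) that apply to each kind of run.
COMPONENTS_FOR = {
  web: %w[web both],
  service: %w[service both]
}.freeze

# Return the rows marked "Run" whose Component applies to the given target
# (:web or :service). Raises KeyError for an unknown target.
def runnable_rows(rows, target)
  wanted = COMPONENTS_FOR.fetch(target)
  rows.select do |row|
    row['Preference'].to_s.strip.casecmp?('run') &&
      wanted.include?(row['Component'].to_s.strip.downcase)
  end
end
```

With this shape, a single sheet can feed both the browser suite (`runnable_rows(rows, :web)`) and the web service suite (`runnable_rows(rows, :service)`).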

Since the browser interface is visually and functionally different for each organization, we built custom functional steps and reusable step definitions such as open browser, read Excel data, log in to the portal, etc., which increases code reusability (the Don’t Repeat Yourself, or DRY, principle). The test suite includes smoke, regression, and sanity tests covering product plans, pricing plans, and customer accounts.

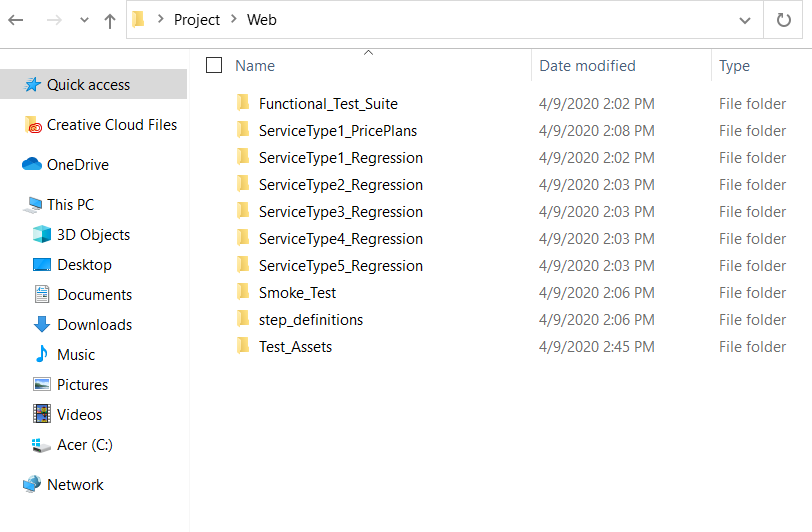

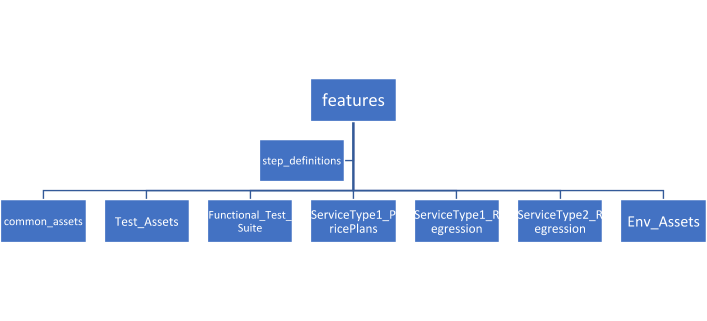

The test folder structure is shown below:

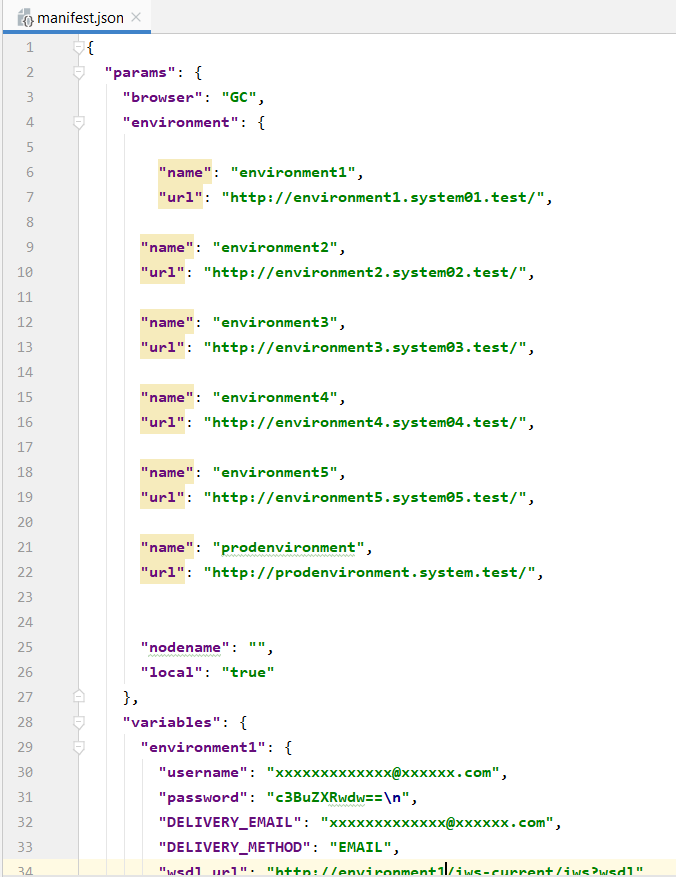

All the Cucumber BDD feature files are in the features folder (which includes Functional_Test_Suite, ServiceType1_PricePlans, ServiceType1_Regression, etc.), and the framework-related files (built using Ruby/Selenium) are in the step_definitions folder. The common_assets folder has the test data files that are shared by Web browser and API testing. The Test_Assets folder has files used exclusively for Web browser testing. The Env_Assets folder has files, usually in JSON format, that contain information about the different test environments and their credentials. A sample Env_Assets file is shown below:

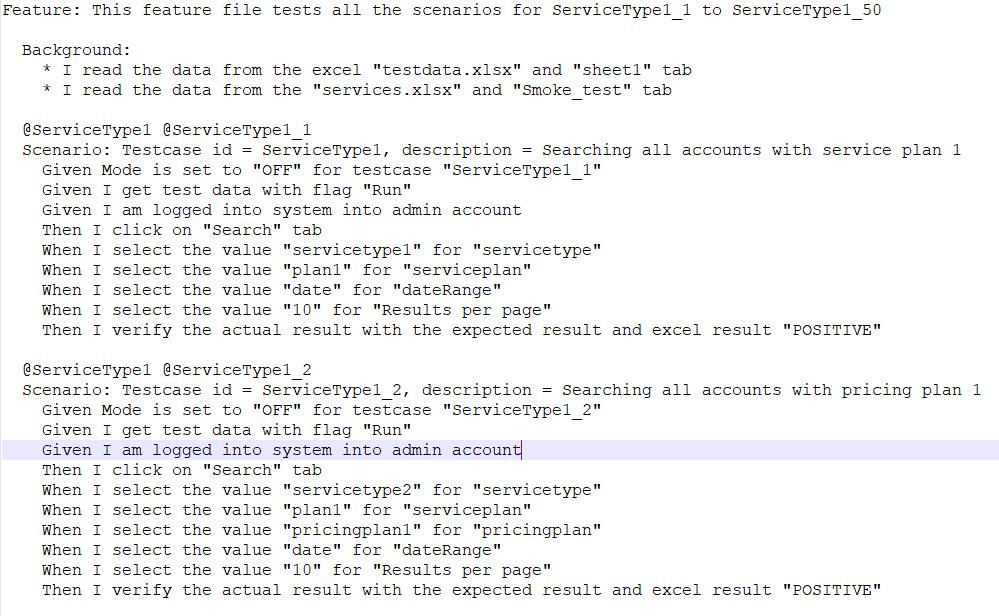
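Reading such an environment file from the test code might look like the sketch below. The JSON layout (one top-level key per environment, with `base_url` and `username` fields) and the `load_environment` helper are assumptions for illustration, not the project's actual file format.

```ruby
require 'json'

# Parse the text of an environment file and return the settings for one
# named environment. Raises KeyError if the environment is not defined.
def load_environment(json_text, name)
  environments = JSON.parse(json_text)
  environments.fetch(name) do
    known = environments.keys.join(', ')
    raise KeyError, "unknown environment: #{name} (known: #{known})"
  end
end

if __FILE__ == $PROGRAM_NAME
  # Hypothetical file content; real files would also carry credentials.
  sample = <<~JSON
    {
      "qa":      { "base_url": "https://qa.example.com",      "username": "qa_user" },
      "staging": { "base_url": "https://staging.example.com", "username": "stg_user" }
    }
  JSON
  puts load_environment(sample, 'qa')['base_url']
end
```

Failing fast on an unknown environment name keeps a typo in a run configuration from surfacing later as a confusing browser error.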

The Cucumber scripts are written in the following way, which can easily be read and understood by any non-technical stakeholder. Here is a regression test example:

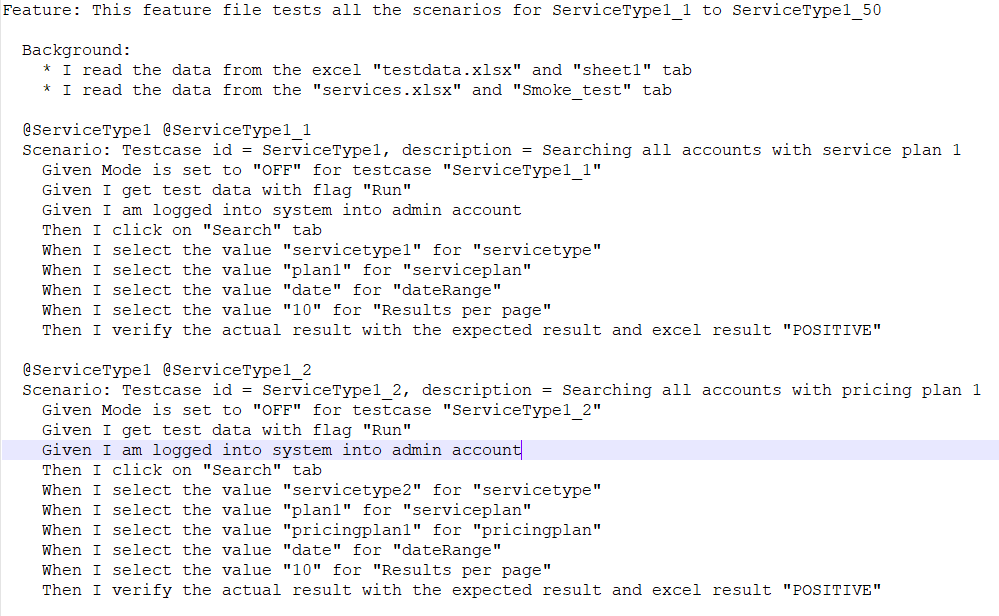

Here is a concise version of a functional test:

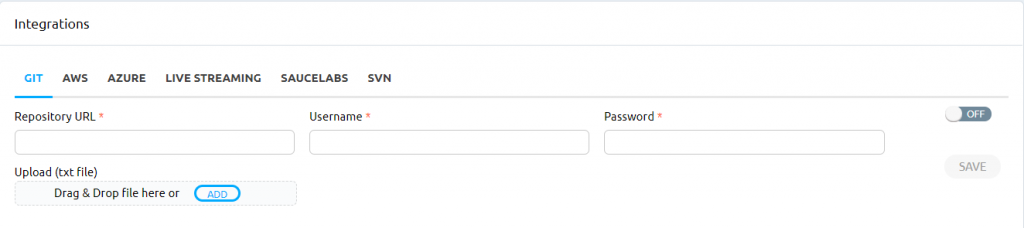

We have employed Git and SVN to maintain the automation framework, test data, and test scripts.

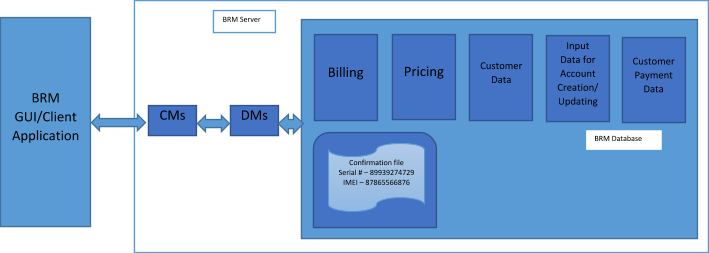

Creating, updating, and deleting customer accounts, which are some of the common operations performed in BRM, are included in the test suite. Dynamically generated device numbers (including serial numbers and IMEI numbers) must be used to create a customer account. The device numbers are stored in the BRM database. We connected to the Oracle database using the ActiveRecord gem over a JDBC connection, ran the necessary queries to retrieve the device numbers, and then created accounts using the new device numbers.

Each time an account is created, a confirmation file (typically an XML file) is generated and saved on the BRM server for reference. Using the Net::SSH library, we access, parse, and read the file to make sure the exact device numbers exist in it. A file is likewise created and saved in the BRM database when an account is updated or deleted, not just on creation.
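The verification step can be sketched as follows, assuming the file content has already been fetched from the server into a string (the Net::SSH retrieval is left out here). The element names `SerialNumber` and `IMEI`, the sample document, and both helper methods are hypothetical; the real confirmation file's schema may differ.

```ruby
require 'rexml/document'

# Collect the text of every element with the given tag name, at any depth.
def device_numbers_in(xml_text, tag)
  doc = REXML::Document.new(xml_text)
  REXML::XPath.match(doc, "//#{tag}").map { |el| el.text.to_s.strip }
end

# Return the expected device numbers that do NOT appear in the file.
# An empty result means every expected number was found.
def missing_device_numbers(xml_text, expected, tags: %w[SerialNumber IMEI])
  found = tags.flat_map { |tag| device_numbers_in(xml_text, tag) }
  expected - found
end

if __FILE__ == $PROGRAM_NAME
  # Hypothetical confirmation file content.
  xml = <<~XML
    <AccountConfirmation>
      <Device>
        <SerialNumber>SN123</SerialNumber>
        <IMEI>490154203237518</IMEI>
      </Device>
    </AccountConfirmation>
  XML
  missing = missing_device_numbers(xml, %w[SN123 490154203237518])
  puts missing.empty? ? 'all device numbers found' : "missing: #{missing.join(', ')}"
end
```

Returning the list of missing numbers, rather than a plain true/false, gives a failing scenario something concrete to report.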

All the scripts are moved to Awetest (a brief description of Awetest is given in our earlier post, Oracle BRM Testing) and are run on different browsers via different VMs. Moving each file to Awetest individually would be cumbersome, which is why Awetest is integrated with some of the common version control and cloud services, as shown below. With this integration, all assets and test data can be moved to Awetest with just one click.

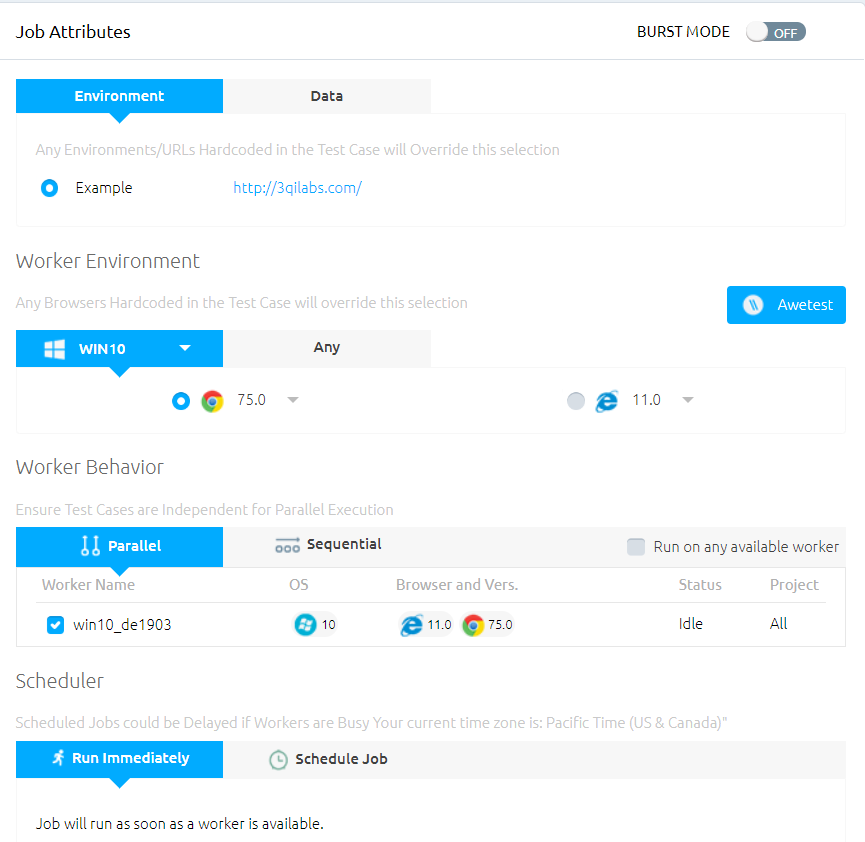

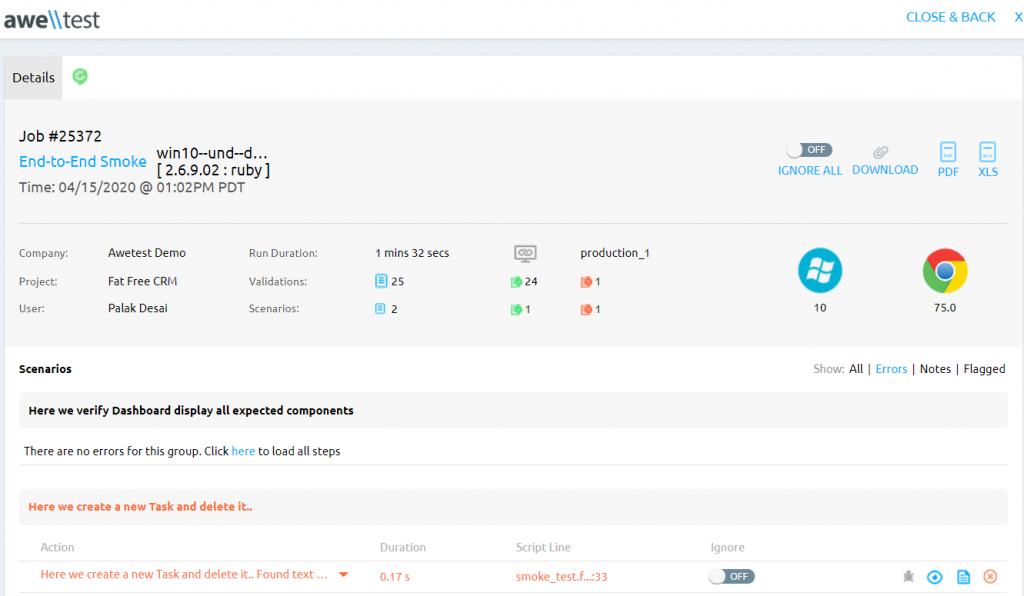

In Awetest, we can run any combination of the required tests, or specific tests, within chosen environments and on any browser (including different browser versions), and analyze various reports as indicated below.

Tests can be run immediately or scheduled to run later as well. A detailed report on the passed and failed tests along with screenshots is generated.

Using our hybrid framework, BRM GUIs can be tested easily, and defects are detected and logged in JIRA, with screenshots captured in case of any error. Any new change in the application is easily reflected in the test scripts, and the utility files reduce the time spent writing test scripts by creating them automatically.